What Happens When AI Tries Your Product First

Synthetic Test Users: Accelerating Research with AI

Fast signal is good… but don’t mistake it for truth.

You’ve seen the pitch.

AI “users” that run through your flows, stress-test your designs, and hand you insights before you even recruit a real participant.

It sounds efficient. Scalable. Almost magical.

But here’s the problem: synthetic users can only mimic behavior, not feel it.

They’ll find broken links and skipped steps, and even predict likely confusion.

What they’ll miss are the parts that actually define an experience: the hesitation before hitting “Pay,” the sigh when copy doesn’t make sense, the spark of trust when something just works.

Synthetic testers can’t feel. And UX, at its core, is about feeling.

What Synthetic Test Users Actually Are

Where They Help

Where They Fail

UXCON25 Spotlight: The Future of Testing

Building a Human + AI Testing Stack

How to Present Findings Leaders Will Believe

Resource Corner

What Synthetic Test Users Actually Are

In plain words: they’re AI-generated participants.

Instead of inviting a human into a lab, you generate a model that simulates how a user might behave. You can even tell it: “Act like a college student trying to pay rent through this app” or “Simulate a busy parent shopping on their phone while distracted.”

The AI runs through your flows, clicks buttons, and “reports” friction points. Think of it as a crash test dummy for UX.

But just like a dummy can’t tell you if the seat feels uncomfortable, a synthetic tester can’t tell you if your onboarding copy feels condescending.

Where They Help

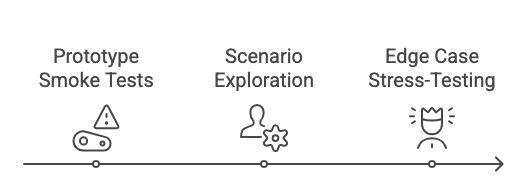

Synthetic testers are most powerful in the early, messy phases:

Prototype smoke tests

Before spending $1,000 recruiting humans, let AI flag dead ends and missing steps in your Figma prototype.

Scenario exploration

Want to know how your flow holds up for multiple personas? Prompt synthetic users to “act” like each one and run through scenarios.

Edge case stress-testing

A real user might not test “wrong passwords 10 times in a row.” An AI can hammer that flow instantly.

They save money. They save time. They make human research sharper because you’re not wasting humans on obvious flaws.
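
To make “dead ends and missing steps” concrete, here is a minimal Python sketch. It is not a synthetic user, just a deterministic walk over a prototype’s screen map. The screen names and the `audit_flow` helper are made up for illustration; nothing here comes from Figma or any real tool.

```python
from collections import deque

# Hypothetical prototype flow: each screen maps to the screens its buttons lead to.
# All screen names are invented for this example.
FLOW = {
    "home": ["cart", "profile"],
    "cart": ["checkout"],
    "checkout": ["payment"],
    "payment": ["confirmation"],
    "profile": ["edit_profile"],
    "edit_profile": [],   # no way out: a dead end
    "confirmation": [],   # intended end of the flow
    "promo": ["cart"],    # nothing links here: unreachable
}


def audit_flow(flow, start, goals):
    """Walk the flow breadth-first from `start` and report structural problems."""
    seen = {start}
    queue = deque([start])
    while queue:
        screen = queue.popleft()
        for nxt in flow.get(screen, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    # A dead end is a reachable screen with no exits that is not an intended goal.
    dead_ends = sorted(s for s in seen if not flow.get(s) and s not in goals)
    # An unreachable screen exists in the prototype but cannot be reached from start.
    unreachable = sorted(s for s in flow if s not in seen)
    return dead_ends, unreachable


dead_ends, unreachable = audit_flow(FLOW, start="home", goals={"confirmation"})
print("Dead ends:", dead_ends)
print("Unreachable:", unreachable)
```

On this toy map, the walk flags `edit_profile` as a dead end and `promo` as a screen nothing links to. These are the kinds of obvious flaws worth clearing out before a human session.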

Where They Fail

But here’s the catch:

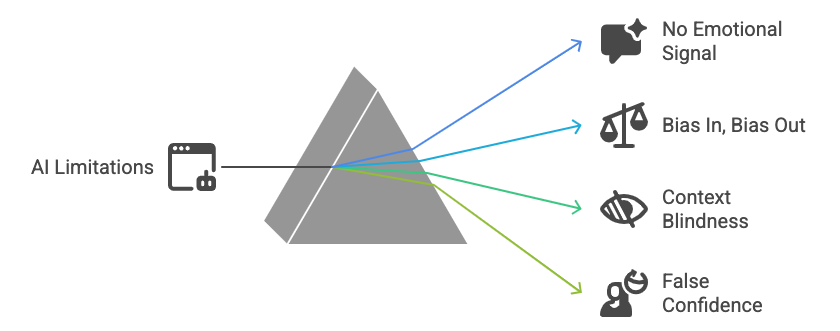

No emotional signal

A synthetic tester can’t show hesitation before committing to a subscription.

Bias in, bias out

If your prompt says “Act like a power user,” you’ll get idealized behavior, not messy reality.

Context blindness

Real people juggle kids, Slack notifications, and half-baked memory. Synthetic users won’t.

False confidence

1,000 AI test sessions can look impressive in a deck… but if they aren’t validated with real people, they’re just noise at scale.

This is where teams get burned: they trust synthetic outputs as “evidence,” then wonder why the feature still flops.

UXCON25 Spotlight: The Future of Testing

What happens when AI is your first user?

At UXCON25, we’ll explore what it means to design in a world where AI isn’t just a tool - it’s a collaborator, a test subject, and sometimes the first “user” of your product. Come learn how to design for humans in an era where AI shapes every interaction.

Thanks to Great Question’s sponsorship, tickets are more affordable than ever: the barrier drops, but the value doesn’t.

Back to where we left off…

Building a Human + AI Testing Stack

The best approach isn’t to replace humans, but to layer methods:

Synthetic first

Use AI to prune bad ideas fast. Catch broken links, bad flows, and missing steps.

Human next

Bring in real participants to capture nuance: emotion, tone, trust, frustration.

Quantify

Use analytics or surveys to measure how widespread an issue really is.

Close the loop

After changes, run both synthetic and human tests again to confirm the fixes worked.

This sequence saves time without sacrificing truth.

How to Present Findings Leaders Will Believe

Your execs don’t want a debate about “AI vs human.” They want to know: Can I trust this decision?

Frame it like this:

The decision: “Ship Variant B.”

The hybrid evidence: “Synthetic testing flagged navigation confusion; 6 of 8 human participants confirmed it.”

The business tie-in: “This fix may reduce abandonment by 12%.”

The caveat: “Synthetic can’t capture tone — human testing covered that gap.”

It’s not about the method. It’s about risk reduction and clarity.
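
One way to keep the hybrid evidence honest is to show how much uncertainty a small human sample carries. The sketch below uses the Wilson score interval, a standard formula for proportions. Picking that formula, the 95% level (z = 1.96), and the function name `wilson_interval` are assumptions for illustration, not something the framing above prescribes.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Approximate confidence interval for a proportion (Wilson score interval).

    z=1.96 corresponds to roughly 95% confidence; that level is an assumption.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0 <= successes <= n:
        raise ValueError("successes must be between 0 and n")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Example: 6 of 8 participants hit the same navigation problem.
low, high = wilson_interval(6, 8)
print(f"Plausible share of users affected: {low:.0%} to {high:.0%}")
```

For 6 of 8, the range comes out at roughly 41% to 93%. That is wide, and it is exactly why the “Quantify” step with analytics or surveys matters before quoting a single number to leadership.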

Resource Corner

Final Thought

Synthetic users are a tool, not a truth machine.

They’ll help you move fast, save money, and stress-test the basics.

But only real humans can reveal how it feels to trust your product.

So use AI to clear the path. Then let humans show you if it’s worth walking.